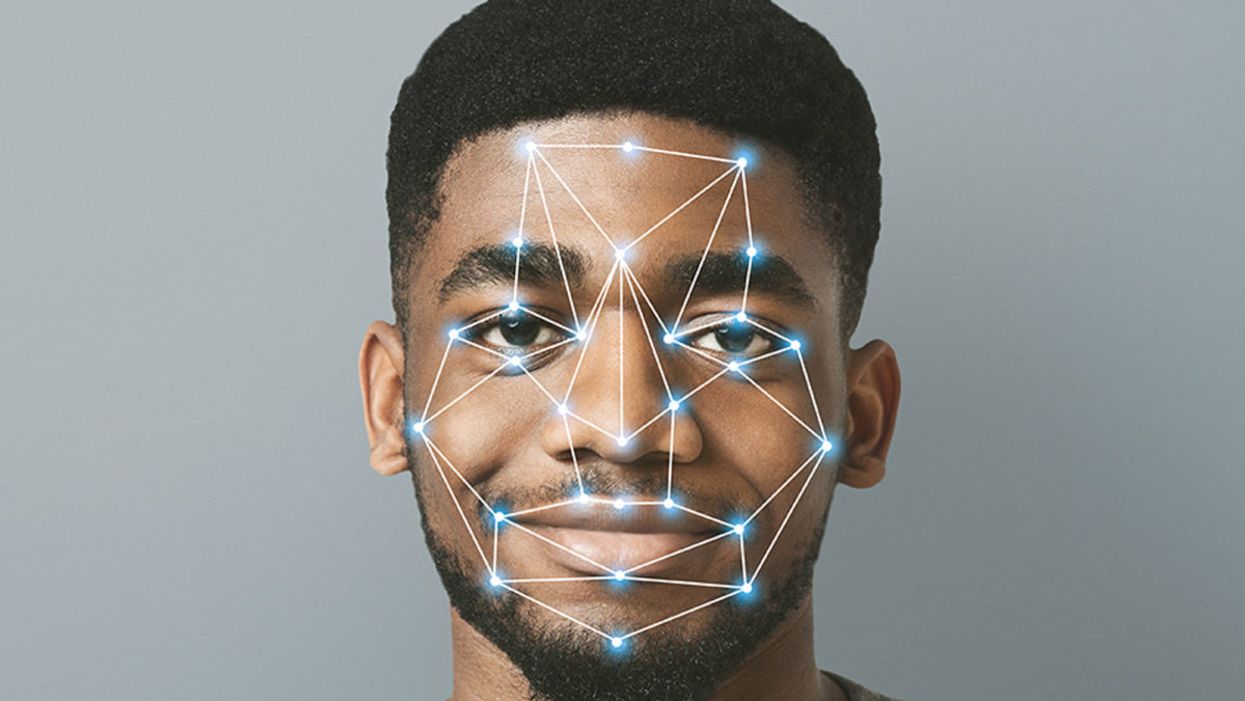

Facial Recognition Can Reduce Racial Profiling and False Arrests

The use of face recognition technology is expanding exponentially right now.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

Opposing facial recognition technology has become an article of faith for civil libertarians. Many who supported the bans in cities like San Francisco and Oakland have declared the technology to be inherently racist and abusive.

I have spent my career as a criminal defense attorney and a civil libertarian -- and I do not fear this technology. Indeed, I see it as a positive development so long as it is appropriately regulated and controlled.

We are living at the beginning of a biometric age, in which technology uses our physical or biological characteristics for a variety of products and services. It holds great promise as well as great risks. The greatest danger, however, would be to categorically oppose this technology and pretend that it will simply go away.

This is an age driven as much by consumer demand as by government demand. Living in denial may be emotionally appealing, but it will only hasten the creation of a post-privacy world. If we do not address this emerging technology, our movements in public will increasingly result in instant recognition and even tracking. That is the type of fishbowl society that strips away any expectation of privacy in our interactions and associations.

The biometrics field is expanding exponentially, largely due to the popularity of consumer products using facial recognition technology (FRT) -- from the iPhone's Face ID to retail programs that recognize customers.

But the privacy community is losing this battle because it relies on privacy rationales and doctrines forged in earlier eras of electronic surveillance. Just as generals are often accused of planning to fight the last war, civil libertarians can cling to past models despite their decreasing relevance in the current world.

I see FRT as having positive implications that are worth pursuing. When properly used, biometrics can actually enhance privacy interests and even reduce racial profiling by reducing false arrests and the warrantless "pat-downs" allowed by the Supreme Court. Bans not only deny police a technology widely used by businesses but also return police to the highly flawed default of "eyeballing" suspects -- a system with a considerably higher error rate than the top FRT programs.

A study in Australia showed that passport officers who had taken photographs of subjects in ideal conditions nonetheless experienced high error rates when identifying those subjects shortly afterward, including a 14 percent false acceptance rate. Currently, officers stop suspects based on their memory of a photograph seen days or weeks earlier. They are often wrong and stop a great number of people in the hope of finding a wanted felon. The best FRT programs achieve an astonishing accuracy rate, though real-world implementation presents challenges that must be addressed.

One legitimate concern arose from early studies showing higher error rates in recognition for certain groups, particularly African American women. An MIT study documenting that error rate prompted major improvements in the algorithms, as well as changes in training, that greatly reduced the frequency of errors. The issue remains a concern, but there is nothing inherently racist about algorithms. They are sets of computer instructions that isolate and process data within the parameters and conditions set by their creators.

To be sure, there is room for improvement in some algorithms. Tests performed by the American Civil Liberties Union (ACLU) reportedly showed only an 80 percent accuracy rate when Amazon's "Rekognition" system compared mug shots to pictures of members of Congress. A more recent ACLU test comparing mug shots to pictures of California legislators reportedly produced the same 80 percent rate.

However, different algorithms are available with differing levels of performance. Moreover, these products can be set to a lower discrimination level. The fact is that the top algorithms tested by the National Institute of Standards and Technology (NIST) achieved accuracy rates greater than 99 percent.

Assuming a top-performing algorithm is used, the result could be highly beneficial for civil liberties compared with the alternative of "eyeballing" suspects. Consider the Boston Marathon bombing, after which police declared a "containment zone" and forced families into the street with their hands in the air.

The suspect, Dzhokhar Tsarnaev, moved around Boston and was ultimately found outside the "containment zone" only after authorities lifted what amounted to near martial law. He had been captured by some surveillance systems but was not identified. FRT can help law enforcement avoid time-consuming area searches and the questionable practice of forcing people out of their homes to physically examine them.

If we are to avoid a post-privacy world, we will have to redefine what we are trying to protect and reconceive how we hope to protect it. In my view, the greatest threat of biometric technologies is to democratic values. Authoritarian nations like China have made huge investments in FRT precisely because they know that the threat of recognition in public deters citizens from associating or interacting with protesters or dissidents. Recognition changes conduct. That chilling effect is what we have to worry about the most.

Conventional privacy doctrines do not offer much protection. The very concept of "public privacy" is treated as something of an oxymoron by courts. Public acts and associations are treated as lacking any reasonable expectation of privacy. In the same vein, the right to anonymity is not a strong avenue for protection. We are not living in an anonymous world anymore.

Consumers want products like FaceFind, which link their images with others across social media. They like "frictionless" transactions and authentications using faceprints. Despite the hyperbole in places like San Francisco, civil libertarians will not succeed in getting that cat to walk backwards.

The basis for biometric privacy protection should not be anonymity but obscurity. We will be increasingly subject to transparency-forcing technology, but we can legislatively mandate ways of obscuring that information. That is the objective of the Biometric Privacy Act that I have proposed in recent research. However, no such comprehensive legislation has passed Congress.

We also need to recognize that FRT has many beneficial uses. Biometric guns can reduce accidents and criminal misuse. New forms of authentication using FRT and other biometric programs could reduce identity theft.

And, yes, FRT could help protect against unnecessary police stops or false arrests. Finally, and not insignificantly, this technology could help stop serious crimes, from terrorist attacks to the flight of dangerous felons. The ability to spot fraudulent entries at airports or to recognize a fleeing felon has obvious benefits for all citizens.

We can live and thrive in a biometric era. However, we will need to bring together civil libertarians with business and government experts if we are going to control this technology rather than have it control us.

[Editor's Note: Read the opposite perspective here.]